Sample Scenario-Based Tasks and Discrete Questions

Available Sample Tasks

Learn how students engaged with and performed on specific tasks. You can even try tasks yourself!

Collect images to be used on a website advertising a television show about the Andromeda Galaxy.

Develop an online exhibit about Chicago's water pollution problem in the 1800s.

Evaluate and explain how to fix the habitat of a classroom iguana.

Create website content to promote a teen recreation center.

Sample Questions From The Assessment

Examples of questions from the 2018 NAEP TEL assessment, including how students performed on them, are presented below. Each example contains a brief description of the question, the format type (selected response or constructed response), the content area, the practice, the measured skill, and student performance. Student performance is shown as the percentage of students who answered a selected-response question correctly or who received full credit for their answer to a constructed-response question.

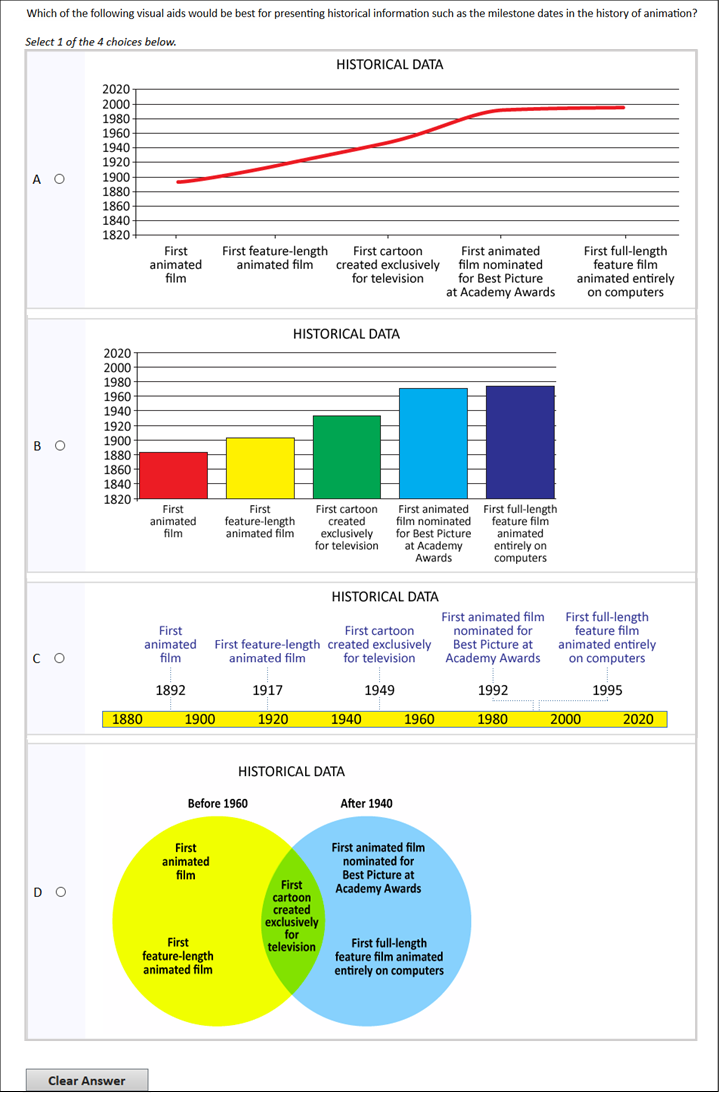

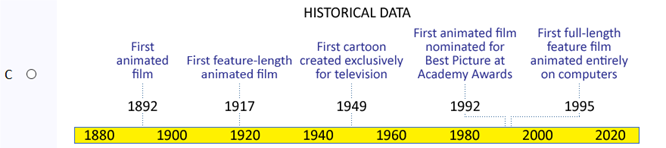

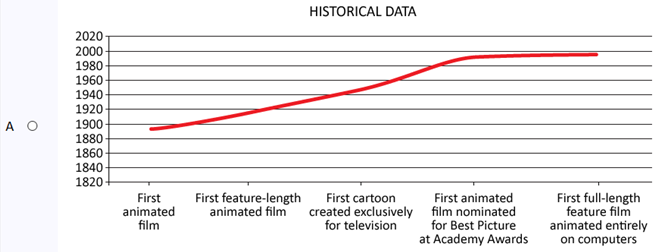

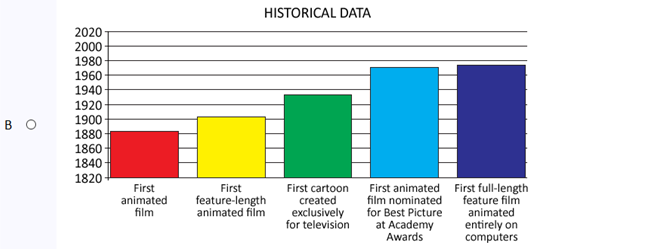

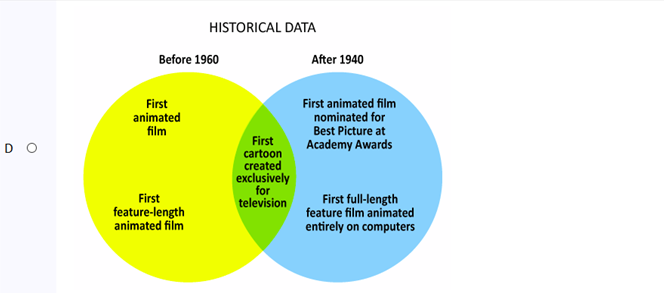

Select a data visualization (History of Animation)

Selected response

Information and Communication Technology

71% Correct

Explain how a technological change might impact collaboration (School Election)

Constructed response

Information and Communication Technology

68% Correct

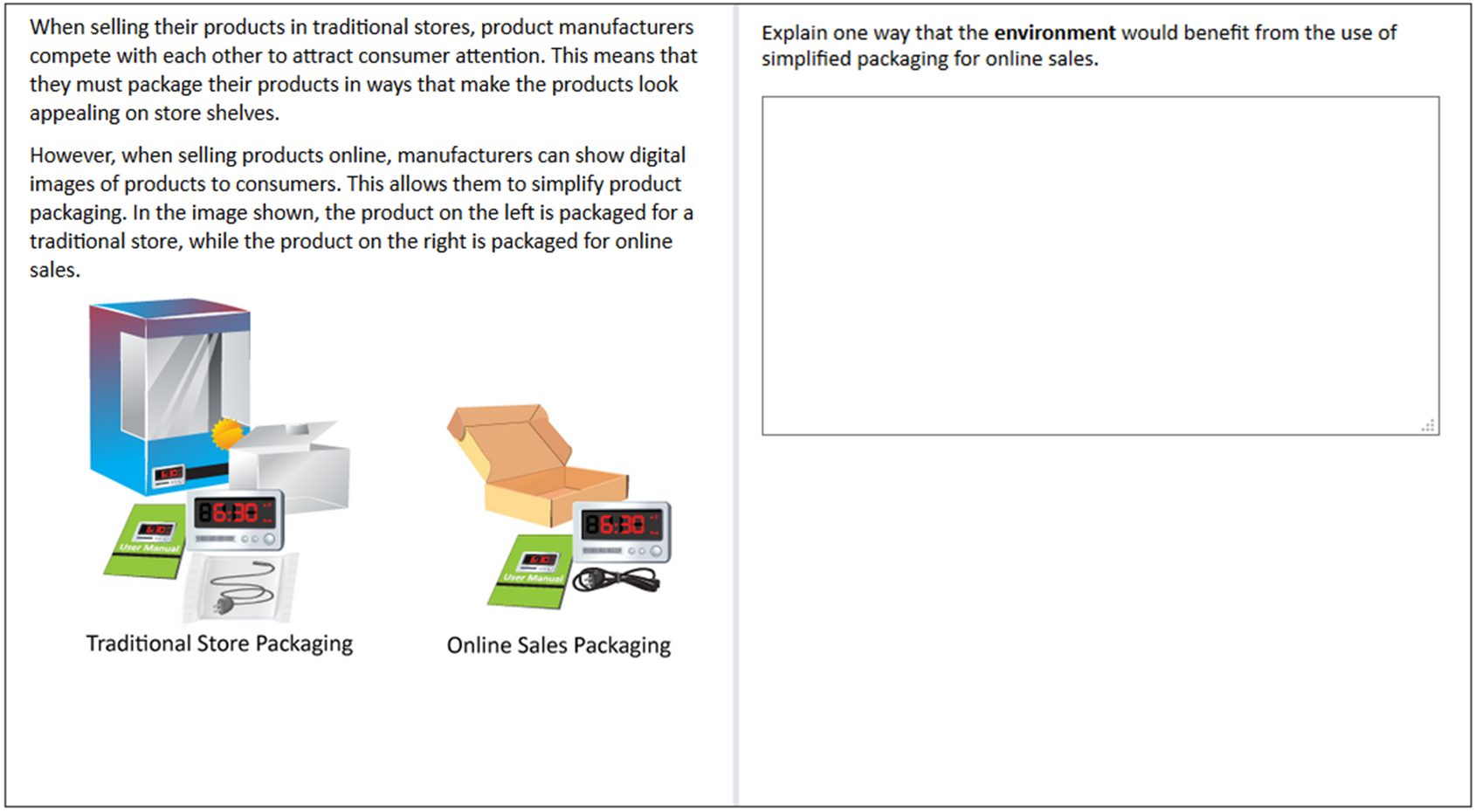

Describe the environmental benefit of a technological change (Plastic Packaging)

Constructed response

Technology and Society

63% Correct

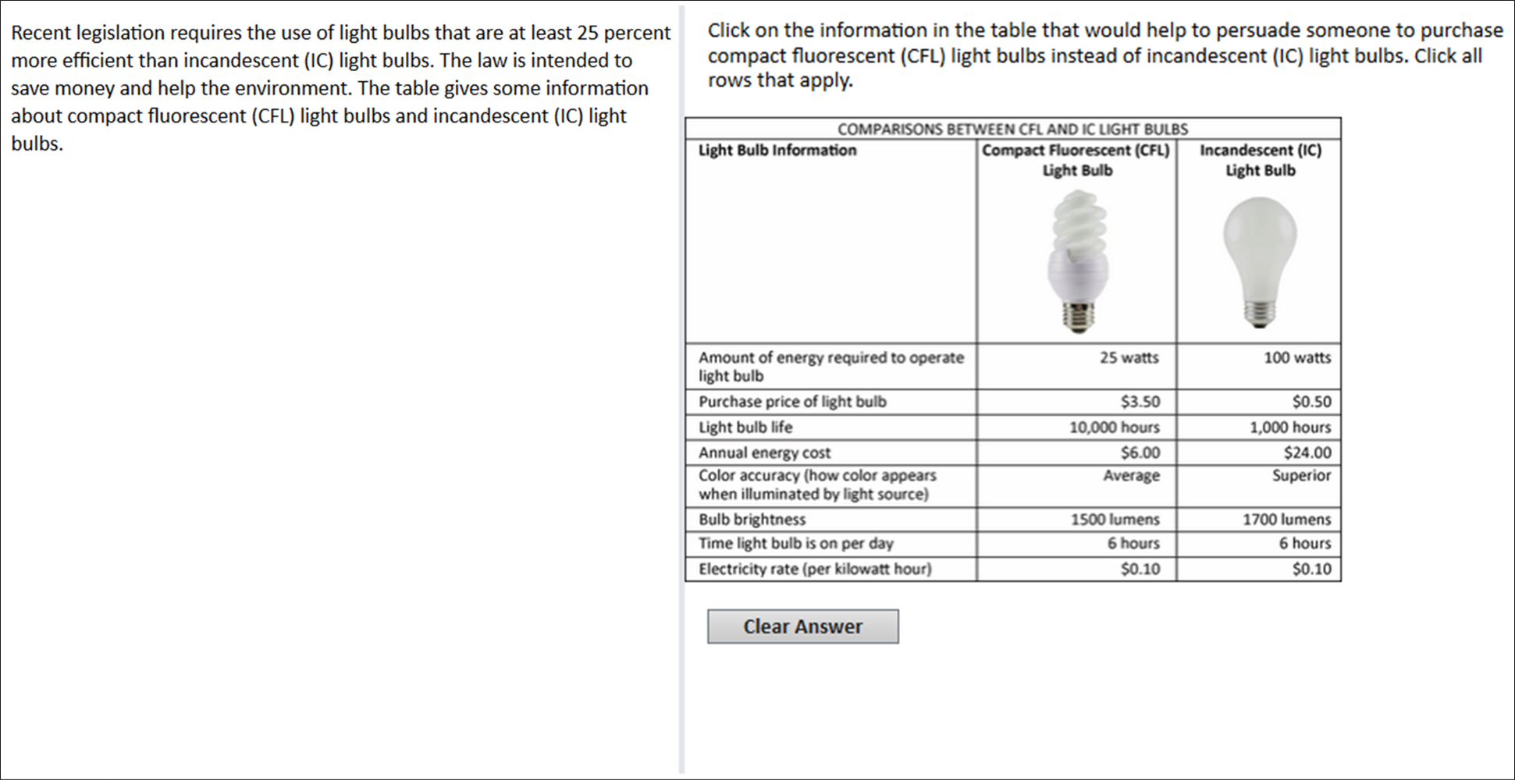

Process information needed for evaluating alternative technologies (Lightbulbs)

Selected response

Technology and Society

33% Correct

In the NAEP TEL assessment, students were tested using computer simulations of technology and engineering problem-solving tasks set in a variety of real-world contexts. Through interaction with these multimedia scenario-based tasks, students used an assortment of tools and applied their TEL knowledge and skills to solve problems across the content areas and practices.

The Andromeda task was administered in both the 2014 and 2018 TEL assessments. The Chicago, Bike Lanes, Iguana Home, and Recreation Center tasks were administered only in the 2014 TEL assessment; they were subsequently released to the public and were not administered in 2018.

Task features:

Learn more about tasks.

The TEL assessment also included interactive discrete questions that were not part of a scenario. See the sample questions from the assessment for examples of discrete questions.

Explore how selected items are mapped on the NAEP TEL scale by using Item Maps.